I have to say that whilst this forum has many advantages and is great for Hackintosh advice, it's not the first place I would come for advice on clustering or on building machines with more than 32 cores or 6TB of RAM.

I have designed, built and run reasonably sized clusters. My largest was 54 machines, each with four quad-core Intel Xeon CPUs, 32GB RAM and 73GB disk, plus 3 p-Series servers for databases and a very large disk array. 30TB comes to mind, which was a lot when we built this around 6-7 years ago. Our biggest issue was cooling the racks down, as the stuff was housed in a secure computing centre and the amount of heat the racks put out completely blew out the 'normal' cooling system. Since we had concrete walls, floors and ceilings, we couldn't just run pipes out the windows.

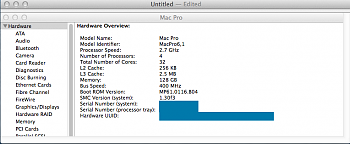

I am not that up to date these days, but the last time I looked, Mac OS X wasn't really designed for high performance computing. It appears to handle 128GB of RAM and might handle more, but apparently the OS has a limit of 32 cores and other restrictions like that.

Everybody (OK, almost everybody) uses Linux to do high performance computing now. I used to work for IBM and most of our high performance kit was Linux based. Though we did get some great performance out of p-Series and z/OS, they're expensive compared to Linux and simply not as flexible. Have a look at the TOP500 supercomputer list and see what's in there. When we did our big rack we would have scraped in at around 1,200 or so. Not much of a claim to fame.

If your problem requires more than 32 cores and apparently 6TB of RAM, then you need to look outside of this forum and into what your software supports. I'd be very, very surprised if your software was Mac OS X only. If you're writing the software yourself, I would also look at a Linux cluster: you can scale out easily, there's masses of software to support clustering, and it's now pretty much mainstream.

If you can afford 6TB of RAM, you can afford a high performance clustering consultant to advise you.

Rob